A Quick Reminder — Where We Left Off

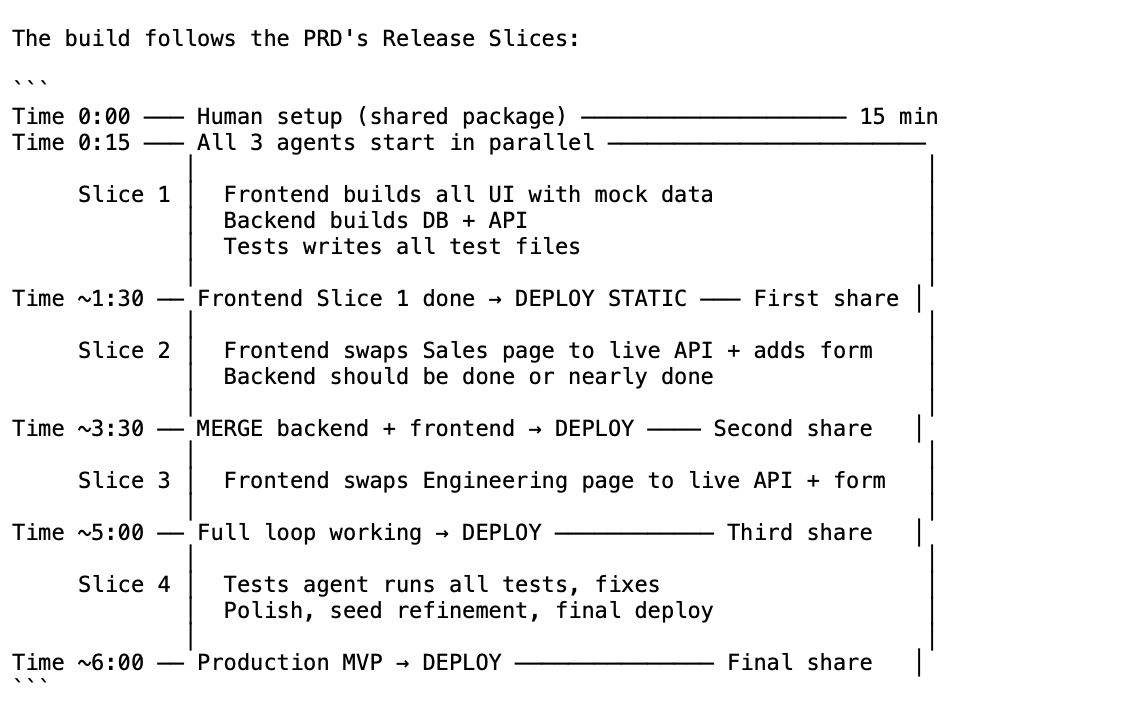

In the previous post I described how I planned a simulated AI-First company around a real product, BridgeBoard, which connects Sales and Engineering and shows how much revenue is at risk due to gaps in the Roadmap. We went through four planning stages: designing the experiment itself, building a PRD through conversation, designing the architecture with AI-First considerations, and splitting the work into Agent Teams.

It all ended with tidy Markdown files — a spec, an architecture doc, and a detailed work order. Not a single line of code had been written.

Now? It was time to build.

The Moment the Team Starts Running

This was the moment I had been most looking forward to. I had a detailed plan, a clear division of work, and I wanted to see what would happen when a team of AI Agents received all of that and started building.

I opened Claude Code, gave it all the context — PRD, architecture, Work Tracks — and asked it to spin up a working team. What happened next surprised me.

Claude Code chose on its own to work with Agent Teams — splitting the work across multiple Agents running in parallel. It didn't wait for me to tell it to do that. It read the Work Tracks document, understood the three-track split (Backend, Frontend, Tests), and simply started organizing.

That's already an interesting point in its own right: when there's a clear division of work, does building an initial project actually require a team? Or would a single Agent with good instructions have done it more efficiently? I'm leaving that as an open question for now — because in the meantime, the team was running.

How I Actually Built the Team

Shared Context — Agent-Context.md

The first thing I did was create an Agent-Context.md file — a document attached to every Agent at the start of their work. It contains the work order, who does what, and where the boundaries are. Like a morning briefing for a real team, except everyone gets it before they start.

A Markdown File per Agent

For each Agent I created a separate Markdown file describing their role, team rules (what not to touch, which files to work in), personal rules (standards specific to their domain), and a detailed task list aligned with the architecture.
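The team rules in those files ("which files to work in, what not to touch") amount to a file-ownership map, and that part can be checked mechanically. Below is a minimal Python sketch of such a check. It assumes a hypothetical layout where the Backend owns `api/` and `db/`, the Frontend owns `web/`, and the Tests Agent owns `tests/`. These directory names are illustrative only and are not BridgeBoard's actual structure.

```python
from pathlib import PurePosixPath

# Hypothetical ownership map: the directories each track's Agent may write to.
# Paths are relative to the repository root.
TRACK_OWNERSHIP: dict[str, tuple[str, ...]] = {
    "backend": ("api/", "db/"),
    "frontend": ("web/",),
    "tests": ("tests/",),
}


def out_of_bounds(track: str, changed_files: list[str]) -> list[str]:
    """Return the changed files that fall outside the track's allowed directories."""
    allowed = TRACK_OWNERSHIP[track]
    violations = []
    for path in changed_files:
        # PurePosixPath collapses "./" and doubled slashes before the prefix check.
        normalized = PurePosixPath(path).as_posix()
        # The trailing "/" in each prefix keeps "webhooks/" from matching "web/".
        if not normalized.startswith(allowed):
            violations.append(path)
    return violations
```

In practice the list of changed files could come from `git diff --name-only` after an Agent finishes a task, and the team lead would route any violations back to the Agent that made them.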

I simply told Claude Code: "Spin up a team — each one has clear instructions and shared context." And apart from a few moments where it stopped to ask me questions about dependencies or design decisions, everything ran.

What Happened When They Ran on Their Own

Let's talk about what's really interesting — what worked and what didn't.

What Worked

Work Planning is worth its weight in gold. Because there was upfront planning with clear instructions to minimize dependencies, the team was able to work in parallel without colliding. They shared Context but didn't wait on each other. That's exactly what I planned in the Work Tracks stage — and now I saw it actually happening.

Detailed instructions = more precise output. Each Agent knew exactly what to do. It wasn't just "build me a backend"; it was "build Route X with Schema Y according to the architecture in the Context." The more precisely a task is described, the less room it leaves for interpretation and the more accurate the output that comes back. It also saves tokens, because the Agent spends less time guessing.

A Tests Agent as QA. The idea of designating an Agent whose entire role is to verify everything works end-to-end was excellent. It was responsible for running all the checks at the end, and if something wasn't working — it identified it, reported it, and the relevant Agent (or the "team lead") went back and fixed it. It's like having a real QA engineer sitting in the team and upholding the standard.

Self-correction of errors. While work was in progress there were errors — package conflicts, external interfaces not responding as expected, configuration that needed fixing. In Claude Code the Agent identifies the error, tries to resolve it, and usually succeeds. That removes a lot of the frustration a developer feels when installing packages or trying to stand up a dev environment. And of course you can always dive in, learn, and ask questions along the way.

What Didn't Work as Well

High token usage. When working with Agent Teams, token consumption is significantly higher (several times more). Each Agent maintains its own Context, and the Orchestrator (team lead) consumes even more to coordinate between them. In retrospect, if I had invested another hour up front preparing a Claude.md, Skills, and Hooks, I probably would have saved a lot of tokens later.

Claude chose Skills on its own. I gave the Agents precise definitions of what to do, but I didn't tell Claude Code itself which Skills to use. It chose what seemed right to it, and that wasn't always what I would have chosen. For example, if I had defined React and Node Skills tailored to the Vercel environment upfront, the generated code would likely have been a better fit from the start.

Claude.md that wasn't prepared in advance. As I noted in the retro of the previous post, the decision to skip preparing a Claude.md came back to bite me. Clear instructions to Claude Code about how to work more precisely, which standards to follow, and how to conserve resources would have been worth the investment.

After the Team Finished — What's on the Floor?

Each of the Agents (Frontend, Backend, and Tests) ran through their tasks, documented their work, and didn't touch each other's files. When there were errors, the team lead knew to route them back to the relevant Agent, and each one fixed its own issues. The team lead took on some fixes itself.

In the end, without any intervention from me beyond planning and the occasional review, the project was up and working: a user interface, a database, APIs, and tests verifying that everything still works after changes.

Let's be honest — it's not perfect. There are places that need work, there's UI that could be improved, there are edge cases that weren't handled. But there's a working product, with business logic running, built in a few hours primarily by a team of Agents. And this was day one or two of the experiment.

It was a wild experience, mainly because I felt my role had shifted. I wasn't sitting there writing code line by line. I was planning, giving instructions, checking results, and deciding what to change. Like a dev manager with a very fast team.

The Startup Lesson — Why It's Important to Stop and Invest

You could argue that I skipped things like Claude.md, Skills, Hooks, and MCP Tools in order to move fast, and that's true. That was the idea: run a fast experiment and feel out what's missing.

But my intuition is clear: investing in those things pays off. And it's exactly like a real company. You move fast to reach customers, you cut corners on things that seem "nice to have," and then there's a moment when you realize that for higher quality and higher velocity you need to stop and sort things out. Technical debt exists even when your team is made up of Agents.

The difference? In an AI-First world, "sorting things out" can take a few hours rather than a full sprint, depending on what you want to add. But it still requires the decision to do it. You can also add pieces selectively and timebox the work to an hour or two until you see enough value to justify more investment.

Bottom Lines

1. Detailed Planning = A Smooth-Running Agent Team

The Work Tracks, the Shared Context, clear definitions for each Agent — all of it together allowed the team to run in parallel without me needing to intervene at every moment. The investment in the previous stage (planning) paid for itself on this day.

2. Agent Teams Aren't Perfect — And That's Fine

High token usage, automatic Skill selection that isn't always accurate, Context that can be optimized. All true. But the product works, and the lessons are clear for the next round.

3. A Tests Agent = A QA Agent Working 24/7

The idea of dedicating an Agent whose sole purpose is to verify the system works end-to-end is a pattern I'll keep repeating. It caught bugs I might have missed and forced the other Agents to fix things before the project was "done."

4. Your Role Changes — And Doesn't Stop Mattering

I barely wrote any code that day. But the planning, the reviews, the decisions about what to change and what to leave: all of that was critical. Without a Human In The Loop checking and steering, the team could have built something that works technically but misses the intent.

5. Technical Debt Exists in an AI-First World Too

I skipped Claude.md, Skills, and Hooks. I paid for it in tokens and in precision. Just like a company that keeps deferring its Technical Debt because "there's no time," and then pays ten times more later.

What's Next?

Now that there's a working product, the next steps are starting to look like a real company:

Deploy — the product needs to go live. My thinking is Vercel since I already know the environment, with Supabase for the database. And there's already a new question there: would choosing a Deploy platform upfront have saved me problems?

Reviewer Team — before moving on to adding features, I want to run a round of specialist Sub-agents: Security Agent, Performance Agent, Test Coverage Agent, and Code Quality Agent. They'll all work as Reviewers, produce findings, and I'll decide what's important and what can wait. This is an approach that in a traditional company requires several people with different expertise — here you can run all of them in parallel.

PR and Code Review — figuring out what I as a Human want to check in the code, and what an Agent or another tool can do for me. An open question I find very interesting: how much of the code actually needs to be read when it's generated by AI? We need to choose between 100% review and trusting the tools with good tests — and I'm still forming my approach to that.

Real Scenarios — a Sales person arriving with a $2M ARR deal and pushing for a feature that doesn't exist, production Incidents that need handling, tickets from Support, requests from Product. Exactly like a real company that's running and discovers that the real world behaves differently from what was planned.

Things I Would Do Differently (Day 2 Retro)

Claude.md ready before you start building. This is the second time I'm writing this. Next round it happens first.

Define Skills upfront. Instead of letting Claude choose on its own, I'll define specific Skills upfront that match the project and the Deploy platform. An hour of prep that pays off significantly later.

Hooks as a standard. Capabilities like automatic checks before saving code, or instructions triggered under certain conditions, could have improved quality at little or no extra token cost.
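To make the Hooks idea concrete, here is how I understand Claude Code's hooks to work. A `PreToolUse` hook is a command that receives the pending tool call as JSON on stdin. It can block that call by exiting with code 2, and in that case its stderr is shown to the agent. The Python sketch below assumes that contract, so check the hooks documentation before relying on it. It also assumes a hypothetical list of protected files. Because it runs as a plain script, it uses no model tokens unless it blocks a call and its message goes back to the agent.

```python
import json
import sys
from pathlib import PurePosixPath
from typing import TextIO

# Hypothetical protection rules; adjust to the project's layout.
PROTECTED_NAMES = {".env", "package-lock.json"}
PROTECTED_DIRS = {"migrations"}


def is_protected(file_path: str) -> bool:
    """True if the file is protected by name or sits under a protected directory."""
    path = PurePosixPath(file_path)
    if path.name in PROTECTED_NAMES:
        return True
    return any(part in PROTECTED_DIRS for part in path.parts[:-1])


def check_tool_call(payload: dict) -> tuple[int, str]:
    """Decide on one tool call: (0, "") allows it, (2, reason) blocks it.

    Assumes Write/Edit calls carry their target path under tool_input.file_path.
    """
    tool_input = payload.get("tool_input") or {}
    file_path = tool_input.get("file_path", "")
    if file_path and is_protected(file_path):
        return 2, f"Blocked: {file_path} is protected. Ask the team lead first."
    return 0, ""


def main(stream: TextIO) -> int:
    """Read the hook payload, report any block reason on stderr, return the exit code."""
    try:
        payload = json.load(stream)
    except json.JSONDecodeError:
        return 0  # Not a payload we understand: don't block.
    if not isinstance(payload, dict):
        return 0
    code, reason = check_tool_call(payload)
    if reason:
        print(reason, file=sys.stderr)
    return code
```

To use it as an actual hook, the script would end with `sys.exit(main(sys.stdin))`. It would then be registered in the project's Claude Code settings as a `PreToolUse` hook matched to the Write and Edit tools. The script path is up to you; nothing in this experiment prescribes one.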

MCP Tools. I didn't treat this as a requirement because I wanted to move fast, but MCP is already a standard. Worth checking what exists and factoring it in from the beginning.

Open question: Agent Teams vs. a single Agent. For a task like standing up an initial project, it's possible that a single Agent with good instructions would have reached a similar result with fewer tokens. Needs testing.

All of these go into the planning for the next stage. And that's exactly the beauty of it — every round teaches the next round to be sharper.

Want to implement an AI-First approach in your organization or team? I'd love a quick call — fill in your details and I'll get back to you.

This post is part of the AI-First Company experiment series I'm running and documenting in real time. Previous post: the planning stage. Follow me here and at chenfeldman.io for the full story.